Crowd-Proofing Live Contribution at Stadiums and Festivals

Summary

Whether you’re at a packed stadium, at a multi-stage festival, or covering the story from the streets outside, the network behaves differently wherever tens of thousands of people gather with smartphones. No single magic setup solves this. What works is matching the right connectivity pattern to each position, understanding where baseline bonding reaches its limits, and knowing which tools are now available when conditions shift mid-broadcast. This article breaks down the patterns that work and where each one applies.

2026 is the heaviest live production year in recent memory. The world football championship across the US, Canada and Mexico. Wimbledon. The Commonwealth Games in Glasgow. The Asian Games in Aichi-Nagoya. Major music festivals drawing hundreds of thousands across multiple days. Street events and carnivals on the scale of Rio. For broadcast engineers covering these events, crowded-venue contribution isn’t a hypothetical – it’s the job, and the venues are going to be packed.


It worked in testing. Then the crowd arrived.

Every broadcast engineer who has worked a major stadium event knows the scenario. The venue is empty, the setup is clean, and every modem shows strong signal. Testing runs beautifully. Then the gates open.

The same is true at festivals, parades, and large-scale outdoor events. Whether it is a packed arena, a multi-stage music festival, or a street event like the Rio Carnival, the cellular environment changes fundamentally the moment the crowds arrive.

Within an hour, the cell sectors serving the venue are carrying the data traffic of tens of thousands of fans simultaneously sharing video, posting updates, and streaming content. Uplink congestion, often more severe than downlink congestion because everyone is pushing data at once, competes directly with your contribution stream. In the most extreme conditions, the result is the failure mode that is hardest to plan around: full bars, zero throughput. This is not a sign that the equipment is broken. It is a structural property of how shared cellular networks behave under mass simultaneous demand.

The problem escalates in large venues. Tiered concrete-and-steel bowl architecture was not designed with RF propagation in mind. Macro cell signals that look strong outside the venue often struggle to penetrate seating areas. Meanwhile, the venue’s own Distributed Antenna System (DAS) infrastructure – if it has one – may be designed for fan connectivity, not broadcast-grade uplink. Weather, unexpected RF interference from temporary production equipment, and venue access restrictions add further variables that are impossible to test in advance.

The challenge does not stop at the venue perimeter. News crews and ENG operators covering the event from fan zones, public viewing areas, and city streets outside the stadium face identical congestion conditions – with none of the venue infrastructure access that a rights-holder production team might have.

There is no setup that eliminates this risk entirely. But there are patterns that reduce it significantly – and an emerging generation of intelligent bonding technology that changes what is achievable when conditions shift unexpectedly during a live broadcast.

Live network testing at a major football stadium captures the pattern clearly. During normal play, drop events are infrequent and recoverable. After a goal, the rate accelerates to roughly 5–8 incidents in under 10 minutes, with an average 45–50% drop in bitrate. By half-time, the network deteriorates to 20–30 drop events per 15-minute window, with bitrate falling by up to 85–90% from baseline.
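To put those percentages in concrete terms, the short sketch below applies them to a contribution stream. The 10 Mbps baseline is a hypothetical figure chosen for illustration; only the percentage ranges come from the stadium test results above.

```python
# Hypothetical 10 Mbps baseline; the drop ranges are the stadium test figures.
BASELINE_MBPS = 10.0

# (moment, smallest reported drop, largest reported drop) as fractions
SCENARIOS = [
    ("goal", 0.45, 0.50),
    ("half-time", 0.85, 0.90),
]


def remaining_range(baseline_mbps, drop_low, drop_high):
    """Return (worst, best) bitrate left after a fractional drop."""
    worst = baseline_mbps * (1.0 - drop_high)
    best = baseline_mbps * (1.0 - drop_low)
    return worst, best


for moment, low, high in SCENARIOS:
    worst, best = remaining_range(BASELINE_MBPS, low, high)
    print(f"{moment}: {worst:.1f}-{best:.1f} Mbps left of {BASELINE_MBPS:.0f} Mbps")
```

On those assumptions, a stream planned around 10 Mbps would have roughly 5 Mbps left after a goal and as little as 1 Mbps at half-time, which is why adaptive bitrate management matters as much as raw capacity.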

What Is the Best Connectivity Strategy for Stadium and Festival Coverage?

A common mistake in pre-production planning is to treat crowd-proofing as a problem with a single correct solution. In practice, the right approach depends on the position type, the venue, the production scale, and the connectivity options available at each specific location.

Most robust real-world setups combine elements from several different patterns. Understanding when each pattern applies – and its limits – is more useful than looking for one solution that works everywhere.

Pattern 1: Encoder in a Clean Connectivity Zone

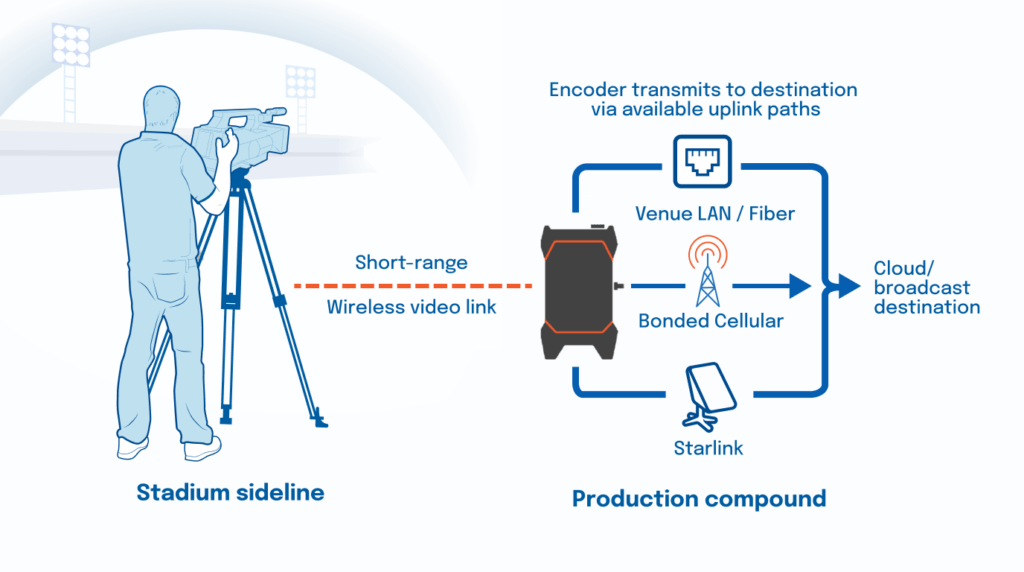

The first pattern involves separating the encoder from the camera when the camera position has poor or unreliable connectivity, but a cleaner connectivity zone is accessible nearby.

The camera stays where the pictures are best – pitch-level, in the crowd, at a roaming position. The video signal travels from the camera to a separate encoder via a short-range wireless video link or a longer cable run. The encoder sits in a position where connectivity is significantly better: a production compound, an elevated position above the congested crowd sector, a nearby building or venue infrastructure point, or even a business or facility adjacent to the venue with accessible internet.

This pattern is practical for a few reasons. First, getting a reliable LAN or fiber drop to a fixed encoder position in a compound is often far easier and cheaper than running that infrastructure to a pitch-side camera. Second, the encoder in a cleaner zone may have access to multiple operator antennas, wired internet, or Starlink, rather than fighting for signal from the same congested sector as everyone else in the crowd.

The tradeoff is operational complexity. You are now managing a wireless video link as well as the uplink itself. The short-range video path adds a point of failure and requires careful placement to maintain line of sight or adequate signal. Teams need to plan and test this link as carefully as the uplink.

This pattern works best for fixed camera positions where the camera is not moving significantly during the event, and where the compound or encoder position has genuinely better connectivity than the camera location.

Pattern 2: Encoder with the Camera (and Where Intelligent Bonding Makes a Difference)

For mobile camera operators working in or around a crowded venue, separating the encoder from the camera is often impractical. The encoder travels with the operator, contributing directly from wherever the camera is pointing.

This is the most common pattern in live sports and festival coverage – and equally the reality for news teams covering the story from the streets and fan areas around it. It is also the pattern most exposed to the unpredictable connectivity conditions of a dense crowd, because the encoder is always in the middle of the congestion rather than above or outside it.

Bonded cellular is essential here. Using multiple SIMs from different operators means that if one carrier’s sector degrades, the others continue to carry the load. The LRT protocol – the foundation of LiveU’s bonded IP contribution – handles packet ordering, dynamic error correction, and adaptive bitrate management across all active paths, keeping the stream on air even as individual connections fluctuate.
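This article does not describe LRT’s internals, and the sketch below is not LRT. As a generic illustration of what bonding involves, it tags packets with sequence numbers, spreads them across several links in proportion to each link’s estimated capacity, and restores order at the receiver. The link names, capacities, and the simple weighted scheduler are hypothetical; error correction and live capacity estimation are omitted.

```python
from dataclasses import dataclass


@dataclass
class Link:
    name: str
    capacity_kbps: float  # hypothetical live capacity estimate for this link
    sent: int = 0         # packets assigned to this link so far


def schedule(payloads, links):
    """Sender side: tag each payload with a sequence number and assign it to
    the usable link that is least loaded relative to its capacity."""
    plan = []
    for seq, payload in enumerate(payloads):
        usable = [link for link in links if link.capacity_kbps > 0]
        chosen = min(usable, key=lambda link: (link.sent + 1) / link.capacity_kbps)
        chosen.sent += 1
        plan.append((seq, chosen.name, payload))
    return plan


def reorder(arrivals):
    """Receiver side: buffer early arrivals and release payloads in sequence."""
    buffer, next_seq, released = {}, 0, []
    for seq, _link_name, payload in arrivals:
        buffer[seq] = payload
        while next_seq in buffer:
            released.append(buffer.pop(next_seq))
            next_seq += 1
    return released


links = [Link("op_a", 4000), Link("op_b", 2000), Link("op_c", 2000)]
payloads = [f"pkt{i}" for i in range(8)]
plan = schedule(payloads, links)
print({link.name: link.sent for link in links})  # {'op_a': 4, 'op_b': 2, 'op_c': 2}

# Links deliver at different speeds, so packets can arrive out of order.
print(reorder(reversed(plan)) == payloads)
```

The link with twice the capacity carries twice the packets, and the receiver still hands the decoder an in-order stream even when every packet arrives out of sequence.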

However, baseline bonding works with a fixed, pre-selected set of operators. When a goal is scored in a packed stadium or a headline act takes the stage at a festival, one or more carriers in the area may experience a sudden bandwidth drop as thousands of devices push to upload simultaneously. Conventional bonding continues to aggregate whatever bandwidth remains across all pre-selected operators – including the degraded one – because it has no mechanism to replace it with a better alternative.

This is where LiveU IQ (LIQ™) makes a meaningful difference.

LIQ is an AI-driven layer that sits on top of LiveU’s bonded IP contribution. Rather than being locked to a fixed set of pre-selected operators, LIQ dynamically switches eSIMs between carriers in real time, based on which networks are actually performing best at the encoder’s current location and time. It draws on a combination of live network data, historical transmission logs from hundreds of thousands of SIM sessions, and a cloud-based decisioning engine to make those switching decisions on the fly. The LU900Q is the first LiveU encoder with native LIQ support – purpose-built for the mobile, field-level positions where intelligent operator selection matters most.
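LiveU has not published how LIQ weighs its inputs. Purely to illustrate the idea of combining live measurements with historical logs, the sketch below ranks candidate operators using a weighted blend of a live throughput sample and the historical median for the same location. The `live_weight` value, the operator names, and all numbers are hypothetical.

```python
from statistics import median


def blended_score(live_kbps, history_kbps, live_weight=0.7):
    """Blend a live throughput sample with the historical median.
    live_weight is a hypothetical tuning parameter in [0, 1]."""
    hist = median(history_kbps) if history_kbps else live_kbps
    return live_weight * live_kbps + (1 - live_weight) * hist


def rank_operators(candidates, live_weight=0.7):
    """candidates: {operator: (live_kbps, [historical kbps samples])}.
    Returns operator names, best first."""
    scores = {
        op: blended_score(live, hist, live_weight)
        for op, (live, hist) in candidates.items()
    }
    return sorted(scores, key=scores.get, reverse=True)


candidates = {
    "carrier_a": (800, [3000, 3200, 2900]),   # strong history, degrading right now
    "carrier_b": (2500, [2400, 2600, 2200]),
    "carrier_c": (1800, [1500, 1700, 1600]),
}
print(rank_operators(candidates))  # ['carrier_b', 'carrier_c', 'carrier_a']
```

Weighting the live sample more heavily lets a carrier with a strong track record drop down the ranking as soon as its local sector degrades, while the historical term keeps one noisy sample from deciding on its own.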

The practical result: when Carrier A’s local sector begins to degrade, LIQ identifies that Carrier B or Carrier C is still performing well and shifts connections dynamically – before the stream is affected. The underperforming operator is effectively replaced, not just tolerated. The production team does not need to manually monitor each modem, diagnose which operator is struggling, and intervene. The system adapts on its own during the transmission.
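The replace-rather-than-tolerate behaviour can be sketched as a simple rule: swap the weakest active operator for the strongest standby one only when the standby clearly outperforms it, and not again until a minimum dwell time has passed, so the system does not flap between carriers on small fluctuations. The margin, dwell time, and names below are hypothetical and do not describe LIQ’s actual decision logic.

```python
from dataclasses import dataclass


@dataclass
class SwitchPolicy:
    margin: float = 1.3        # hypothetical: standby must be 30% better
    min_dwell_s: float = 20.0  # hypothetical: minimum seconds between switches


def pick_replacement(active, standby, scores, last_switch_s, now_s, policy):
    """Return (old, new) if an active operator should be replaced, else None.

    active / standby: lists of operator names.
    scores: {operator: current throughput estimate in kbps}.
    """
    if now_s - last_switch_s < policy.min_dwell_s or not standby:
        return None
    worst = min(active, key=lambda op: scores[op])
    best = max(standby, key=lambda op: scores[op])
    if scores[best] > scores[worst] * policy.margin:
        return worst, best
    return None


scores = {"carrier_a": 600, "carrier_b": 2400, "carrier_c": 1900, "carrier_d": 700}
decision = pick_replacement(["carrier_a", "carrier_b"], ["carrier_c", "carrier_d"],
                            scores, last_switch_s=0.0, now_s=60.0, policy=SwitchPolicy())
print(decision)  # ('carrier_a', 'carrier_c')
```

By contrast, baseline bonding with a fixed operator set would keep `carrier_a` in the bond and simply aggregate its remaining 600 kbps.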

At the 2026 Winter Games, the first large-scale global deployment of LIQ, around 60% of supported sessions used LIQ, with those sessions achieving over 36% higher average bitrates. More than 980 LiveU units were deployed by broadcasters from 37 countries across crowded arena venues and remote mountain locations. Broadcasters were able to rely on IP contribution as a primary workflow for 4K and HDR coverage across nearly 12,000 live sessions.

This is not a marginal improvement for edge cases. At major events where peak public network usage moments are entirely predictable – goals, halftime, headliners, finishes – having a system that anticipates and adapts to those moments is the difference between consistent broadcast-quality output and managing degraded feeds under pressure.

Pattern 3: Fixed Positions on Wired Connectivity

Not every camera position requires mobility. Commentary booths, presentation positions, wide cameras at fixed points, and broadcast positions within permanent venue infrastructure are often better served by wired connectivity than by cellular bonding.

Where venue fiber or ethernet is accessible, it is typically the most stable and predictable option. It is not subject to cellular congestion, does not depend on SIM management, and provides consistent throughput for the duration of the event.

Even at fixed positions, bonded IP adds value in several ways:

- Event infrastructure resilience: venue LAN and fiber are not immune to failure – cable damage, patch panel faults, and venue IT issues can take a wired connection down mid-broadcast. IP bonding provides an automatic fallback that keeps the feed on air without manual intervention.

- Least-cost bonding: a LiveU encoder can use wired ethernet as the primary path while keeping cellular available as an automatic backup if the wired connection drops.

- Starlink and other LEO satellite integration: for positions where running permanent fiber is impractical, LiveU units support bonding Starlink (or another LEO connectivity solution) as one path alongside cellular and ethernet, providing more resilience than Starlink alone.

- Cost-effective flexibility: bonded IP is often more practical than negotiating dedicated satellite time for positions that only occasionally need high-throughput coverage.
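The least-cost idea from the list above can be sketched as a greedy allocation: fill the target bitrate from the cheapest available path first, and draw on more expensive paths only when cheaper ones fall short. The path names, the `cost_rank` field, and this greedy approach are assumptions for illustration, not how LiveU encoders implement the feature.

```python
from dataclasses import dataclass, replace


@dataclass
class Path:
    name: str
    capacity_mbps: float  # current usable capacity (0 if the link is down)
    cost_rank: int        # hypothetical: lower means cheaper to use


def allocate(paths, target_mbps):
    """Assign bitrate to the cheapest paths first.
    Returns {path name: allocated Mbps}; the total may fall short of target."""
    plan, remaining = {}, target_mbps
    for path in sorted(paths, key=lambda p: p.cost_rank):
        if remaining <= 0:
            break
        take = min(path.capacity_mbps, remaining)
        if take > 0:
            plan[path.name] = take
            remaining -= take
    return plan


paths = [Path("ethernet", 20.0, 0), Path("cell_1", 6.0, 1), Path("cell_2", 5.0, 1)]
print(allocate(paths, 8.0))   # {'ethernet': 8.0}

# Simulate the wired link failing mid-broadcast.
failed = [replace(p, capacity_mbps=0.0) if p.name == "ethernet" else p for p in paths]
print(allocate(failed, 8.0))  # {'cell_1': 6.0, 'cell_2': 2.0}
```

In practice the backup paths would presumably need to stay connected and measured even while idle, so the switch-over is immediate; this sketch models only the allocation step.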

For most fixed positions, the planning question is straightforward: is venue fiber available and practical? If yes, use it as the primary path. If not, evaluate whether bonded cellular, a LEO satellite path, or a combination provides the throughput and redundancy the position needs.

Comparing the Uplink Approaches

The table below summarizes the three approaches by strengths, limitations, and the positions each is best suited for at high-density events.

| Approach | Strengths | Limitations | Best Suited For |
|---|---|---|---|
| Baseline IP bonding | Reliable in moderate congestion; multiple paths provide redundancy; lower cost than satellite; widely deployed | Works with a fixed set of pre-selected operators; if one operator degrades, bonding continues to use whatever bandwidth remains on that carrier rather than replacing it; limited visibility into which path is actually performing | General field use; events with manageable crowd density; backup alongside wired |
| Intelligent IP bonding (LiveU with LIQ) | AI-driven operator selection in real time; dynamically replaces underperforming operators with better alternatives; adapts to peak congestion events; uses historical and live network data; reduces manual intervention during transmission | Requires LiveU data and eSIMs; performance gains depend on operator diversity in the area | High-density sports and festival environments; positions requiring mobility; any scenario where operators may degrade unpredictably |
| Fixed / dedicated connectivity (fiber, venue LAN, or Starlink and other LEO solutions as a supplement) | Predictable throughput at fixed positions; not affected by cellular congestion; no SIM management needed | Not available everywhere; venue fiber can be expensive or impractical at sideline or pitch-level positions; LEO satellite has latency variability and requires line of sight | Commentary booths; presentation positions; wide cameras at fixed locations; production compounds |

Practical Planning Checklist

The following checklist is not a guarantee of success – it is a set of checkpoints that reduce the likelihood of surprises. Adapt it to the specifics of each event and venue.

Before the event

- Identify the crowd pressure points: which areas of the venue will have the highest fan density, and which cell sectors serve those areas

- For events with significant coverage outside the venue – fan zones, public viewing areas, surrounding streets – map those positions separately. They share the same congested cell sectors with none of the venue infrastructure access.

- Map your camera positions against those pressure points – which positions are in the crowd, which are elevated or outside it

- Identify potential clean connectivity zones: production compounds, elevated positions, adjacent buildings or facilities with accessible internet

- Assess wired access for fixed positions: which positions can use venue LAN or fiber, and at what cost and complexity

- Decide which pattern fits each position – mobile camera with encoder attached, encoder separation, or fixed wired – before arrival on site

- Plan antenna placement and confirm equipment physical security and power at each position

- Check whether the venue has its own in-building antenna network (DAS) and whether operator priority access is available for broadcast credentials
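The per-position decision in this checklist can be captured as a small rule, sketched below. The survey fields and the order of the checks are assumptions drawn from the pattern descriptions earlier in this article: mobile positions keep the encoder with the camera, fixed positions prefer wired access, and encoder separation applies where a cleaner zone is reachable. A real plan will also weigh cost, access, power, and physical security.

```python
from dataclasses import dataclass


@dataclass
class Position:
    name: str
    mobile: bool             # does the camera move during the event?
    wired_access: bool       # is venue LAN or fiber practical at this spot?
    clean_zone_nearby: bool  # is a better-connected encoder spot within link range?


def choose_pattern(pos):
    """Hypothetical mapping from a site survey to the three patterns above."""
    if pos.mobile:
        return "Pattern 2"  # encoder travels with the camera on bonded cellular
    if pos.wired_access:
        return "Pattern 3"  # wired primary, bonded IP as automatic fallback
    if pos.clean_zone_nearby:
        return "Pattern 1"  # move the encoder to the cleaner zone
    return "Pattern 2"      # no better option: bond at the camera position


survey = [
    Position("commentary booth", mobile=False, wired_access=True, clean_zone_nearby=True),
    Position("pitch-side fixed camera", mobile=False, wired_access=False, clean_zone_nearby=True),
    Position("roaming crowd camera", mobile=True, wired_access=False, clean_zone_nearby=False),
    Position("fan zone ENG crew", mobile=True, wired_access=False, clean_zone_nearby=False),
]
for pos in survey:
    print(f"{pos.name}: {choose_pattern(pos)}")
```

Writing the rule down, even this crudely, forces every position to have an explicit pattern before the crew arrives on site.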

During testing and rehearsal

- Test during realistic load conditions if any opportunity exists – an earlier session, a public rehearsal, or any time the venue is partially occupied

- Validate end-to-end stream stability and latency for each position under test conditions, not just signal strength

- Confirm that short-range wireless video links (where used) maintain quality while other RF sources in the venue are active

- Identify which operator performs best at each position during testing – this informs pre-event configuration and gives baseline reference data. LiveU Analytics can also surface this information across past sessions at the same venue.
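Turning rehearsal measurements into a per-position operator ranking is a simple aggregation, sketched below. It assumes test results have been collected as `(position, operator, kbps)` records; that record format is hypothetical and is not the LiveU Analytics export format. The median is used instead of the mean so that a single anomalous sample does not dominate.

```python
from collections import defaultdict
from statistics import median


def best_operator_per_position(samples):
    """samples: iterable of (position, operator, kbps) tuples.
    Returns {position: [(operator, median_kbps), ...]} sorted best first."""
    by_pos = defaultdict(lambda: defaultdict(list))
    for position, operator, kbps in samples:
        by_pos[position][operator].append(kbps)
    ranking = {}
    for position, ops in by_pos.items():
        medians = [(op, median(values)) for op, values in ops.items()]
        ranking[position] = sorted(medians, key=lambda item: item[1], reverse=True)
    return ranking


samples = [
    ("north_stand", "carrier_a", 2100), ("north_stand", "carrier_a", 2300),
    ("north_stand", "carrier_a", 400),  # one bad sample does not sink carrier_a
    ("north_stand", "carrier_b", 1800), ("north_stand", "carrier_b", 1900),
    ("compound", "carrier_a", 900), ("compound", "carrier_b", 3100),
]
for position, ranked in best_operator_per_position(samples).items():
    print(position, ranked[0])
```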

On the day

Validate all paths before the crowd arrives – this is the only window with clean conditions. Monitor contribution performance centrally during the event and keep fallback options ready for critical positions. For setups using LIQ, the system handles operator switching dynamically during peak moments; focus monitoring on session health rather than individual modem indicators.
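As a rough illustration of session-level monitoring, the sketch below classifies a window of samples using only the aggregate delivered bitrate and end-to-end delay of the whole bonded session, ignoring individual modem indicators. The sample fields, the 80% threshold, and the three status labels are hypothetical choices, not LiveU’s monitoring metrics.

```python
from dataclasses import dataclass


@dataclass
class SessionSample:
    total_kbps: float  # aggregate delivered bitrate across all bonded links
    latency_ms: float  # end-to-end delay estimate for the session


def session_health(samples, target_kbps, max_latency_ms, min_ratio=0.8):
    """Classify a window of samples as 'ok', 'degraded' or 'critical'.
    min_ratio is a hypothetical fraction of the target bitrate."""
    if not samples:
        return "critical"  # no data from the session at all
    avg_kbps = sum(s.total_kbps for s in samples) / len(samples)
    worst_latency = max(s.latency_ms for s in samples)
    if avg_kbps >= target_kbps * min_ratio and worst_latency <= max_latency_ms:
        return "ok"
    if avg_kbps >= target_kbps * min_ratio / 2:
        return "degraded"
    return "critical"


window = [SessionSample(8000, 900), SessionSample(7600, 1100), SessionSample(7200, 1200)]
print(session_health(window, target_kbps=8000, max_latency_ms=1500))  # ok
```

A single modem dropping out does not change the status here unless the session as a whole falls below target, which mirrors the shift in attention the paragraph above describes.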

The Bottom Line: Bonding Is the Baseline. Intelligence Is the Edge.

Crowd-proofing means reducing risk – not eliminating it. Some events still require dedicated fiber circuits or satellite as the primary path for tier-1 feeds, and that remains the right choice in some contexts. Weather, unexpected RF interference, and last-minute position changes are variables that no planning process can fully anticipate.

Cellular bonding changed live contribution. It broke the dependency on satellite trucks and fixed fiber for mobile, field-level, and multi-angle coverage. For most environments, a well-configured bonded IP setup with diverse operator SIMs delivers reliable contribution at a fraction of the cost of traditional methods.

In the most demanding environments – packed stadiums, major festivals, multi-camera sports events – baseline bonding gets you there. Intelligent operator selection keeps you on air when the crowd peaks. The moments that test production infrastructure hardest are entirely predictable: the goal, the headliner, the finish. Those are the moments when the crowd hits the network at once, and when even a well-configured set of operators can be stretched by peak demand.

That is what LIQ is built for. LiveU’s approach pairs LRT, the reliable IP transmission foundation, with LIQ, the intelligent operator selection layer on top, specifically for those moments. The system operates within the limits of available operator diversity at the location, so knowing which operators have good coverage at a specific venue remains valuable pre-event preparation. But within those conditions, LIQ continuously monitors network performance against a large body of historical and live data and switches to better-performing operators in real time, removing the reactive burden from production teams and delivering consistency when it matters most.

The practical engineering goal remains unchanged: know your positions, plan your connectivity patterns, test under realistic conditions, and keep fallback options ready. What has changed is that the system itself can now adapt in ways that were not possible with conventional bonded setups – giving production teams a more resilient foundation to work from, whatever the crowd brings.